I have recently been working on privacy protection for structured data and have shifted my focus toward unstructured data security. Initially, I assumed the difference would be negligible; however, while the data themselves may share similarities, the detection and security methodologies differ significantly. I am currently working on designing a data-centric security procedure that can handle both formats simultaneously. Since this aligns closely with current industry shifts, I’ve decided to document my thoughts here.

Introduction: The Evolution of AI Infrastructure and Shifting Data Risks

As of 2026, Artificial Intelligence (AI) has moved beyond being a mere productivity tool to become a strategic asset that dictates national and corporate economic competitiveness. While previous digital transitions focused on managing structured databases, the modern AI era represents an evolution toward “agentic infrastructure” that generates real-time value from unstructured data, including text, images, and voice.

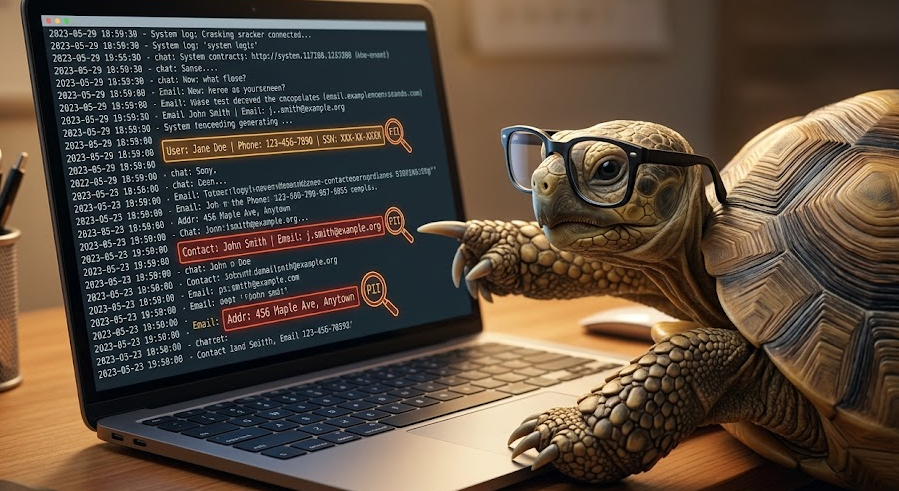

However, this rapid technological leap carries critical risks, specifically the unintended exposure of Personally Identifiable Information (PII) within these unstructured formats. While traditional security focused on deterministic code defects, today’s landscape requires advanced governance to intelligently identify and block sensitive information within massive unstructured datasets processed by AI.

Effective AI security must reflect the probabilistic nature of how models consume data and generate results. Utilizing low-quality or “poisoned” data containing sensitive information for training or Retrieval-Augmented Generation (RAG) systems leads to unreliable outputs that are difficult to rectify. This distortion in decision-making results in a diminished return on investment (ROI). This report analyzes the technical architecture and policy implementation of PII detection and real-time filtering as an essential strategy to mitigate these risks.

Technical Limitations and the New Paradigm of Unstructured Data Security

Traditional security methods, such as simple keyword searches or Regular Expression (Regex) based systems, face clear limitations in protecting complex unstructured data. While Regex can easily detect fixed-format patterns like Social Security numbers, it frequently fails to identify names, specific job titles, or sensitive data hidden within a conversational context.
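To make the limitation concrete, here is a minimal sketch using only Python's standard library. The pattern, the helper name `find_ssns`, and both sample sentences are my own illustrations, not taken from any particular product. The pattern checks format only, so it catches the fixed-layout number but has nothing to say about a person identified by name and job title.

```python
import re
from typing import List

# US Social Security number in the common AAA-GG-SSSS layout (format check only).
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def find_ssns(text: str) -> List[str]:
    """Return every substring that looks like an SSN."""
    return SSN_PATTERN.findall(text)


# Hypothetical sample sentences, invented for illustration.
structured = "Customer SSN: 123-45-6789, account opened in March."
conversational = "Please send the contract to our head of cardiology, Jane, at her home."

print(find_ssns(structured))      # ['123-45-6789']
print(find_ssns(conversational))  # [] although the sentence points at one specific person
```

The second sentence contains no fixed-format token at all, yet a reader could identify the person from the role and first name. That gap is what context-aware detection is meant to close.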

The Introduction of Intelligent PII Detection Architecture

To overcome these hurdles, 2026-era security solutions leverage Transformer-based Natural Language Processing (NLP) models. These AI-driven filtering technologies do not simply match text strings; they use the surrounding context to decide whether a span of text is sensitive.

- Context-Aware Detection: Beyond recognizing address patterns, these systems identify sensitive information inferred through job titles or specific ways individuals are referenced within sentences.

- Multimodal Capabilities: These solutions extend beyond text, utilizing Optical Character Recognition (OCR) to identify and automatically mask sensitive information within images.

This intelligent detection serves as a real-time surveillance network during deployment, minimizing the risk of privacy failure when AI agents collect external data or generate responses.

Technical Implementation: Microsoft Presidio and Intelligent Engines

One of the most widely used frameworks for unstructured data security is the open-source Microsoft Presidio. It is a general-purpose PII detection and de-identification SDK that is now commonly placed in front of AI agents and LLM pipelines, and it operates through two core engines:

1. Analyzer Engine: Precise Identification and Confidence Scoring

The Analyzer goes beyond simple filtering by using a multi-faceted verification process:

- Multiple Detection Logics: It combines Regex, Named Entity Recognition (NER), and checksum algorithms to pinpoint the location and type of PII.

- Confidence Scores: The Analyzer assigns each detection a score between 0 and 1 that reflects its certainty. Security administrators can set policies that act only on detections above a chosen threshold, which cuts the operational cost of false positives at the price of letting some low-scoring true positives through.
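The interplay of pattern matching, checksum validation, and score thresholds can be sketched in plain Python. This is a toy analyzer of my own, not Presidio's API: the `Finding` class, the `analyze` and `above_threshold` helpers, the two patterns, and the score values 0.9 / 0.3 / 0.8 are all assumptions chosen for illustration, and NER is left out because it needs a trained model.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class Finding:
    entity_type: str
    start: int
    end: int
    score: float  # illustrative confidence between 0 and 1


# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")
EMAIL_PATTERN = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


def luhn_valid(number: str) -> bool:
    """Luhn checksum: double every second digit from the right and sum."""
    digits = [int(ch) for ch in number if ch.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def analyze(text: str) -> List[Finding]:
    findings = []
    for m in CARD_PATTERN.finditer(text):
        # A format match alone is weak evidence; a passing checksum raises the score.
        score = 0.9 if luhn_valid(m.group()) else 0.3
        findings.append(Finding("CREDIT_CARD", m.start(), m.end(), score))
    for m in EMAIL_PATTERN.finditer(text):
        findings.append(Finding("EMAIL_ADDRESS", m.start(), m.end(), 0.8))
    return sorted(findings, key=lambda f: f.start)


def above_threshold(findings: List[Finding], threshold: float) -> List[Finding]:
    """Keep only detections the policy should act on."""
    return [f for f in findings if f.score >= threshold]


sample = ("Card 4111 1111 1111 1111 was charged; ticket 4111 1111 1111 1112 "
          "is unrelated. Contact ana@example.com.")
for f in above_threshold(analyze(sample), 0.5):
    print(f.entity_type, repr(sample[f.start:f.end]), f.score)
```

With a threshold of 0.5, the number that fails the checksum is treated as a probable false positive and left alone, while the valid test card number and the email address are passed on for de-identification.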

2. Anonymizer Engine: Enforcing De-identification

This engine transforms the detected spans based on the Analyzer’s findings:

- Diverse Operations: It supports various de-identification methods, including masking, encryption, hashing, and redaction.

- Reversible Transformation: By applying AES encryption, the system allows authorized administrators to decrypt and view the original data if necessary, providing operational flexibility.

Personal Note: In my experience, while these tools perform exceptionally well in English-speaking contexts, they have historically struggled with higher false-positive rates in other languages. However, given the scale of development by major tech firms, the output quality is expected to improve significantly.

Strategic Scenarios for Essential Real-time Filtering

Handling PII in unstructured data requires more than just deletion. Policy decisions must determine the appropriate filtering operation based on the data’s purpose and required security level.

| Filtering Operation | Technical Description | Optimal Use Case |
| --- | --- | --- |
| Replace | Replaces PII with an entity type (e.g., `<PERSON>`) | Maintaining context and semantic structure for LLM prompts |
| Redact | Completely deletes the information | High-security internal reports or final summaries |
| Mask | Obscures parts of the data (e.g., `*`) | Saving chat logs in support centers while maintaining readability |
| Hash | Uses SHA256 to maintain uniqueness | Identifying individuals for statistical analysis while protecting names |
| Encrypt | Reversible transformation via crypto-keys | Management tools where admins may need to verify originals |
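The first four operations in the table are simple enough to sketch with the standard library. This is not Presidio's implementation: the operator functions, the `POLICY` mapping, the `anonymize` helper, and the sample sentence are hypothetical names and data I made up to show how a per-entity policy could select an operation. Encrypt is left out because the standard library has no AES, so it would need a third-party crypto package.

```python
import hashlib
from typing import Callable, Dict, List, Tuple

Span = Tuple[str, int, int]  # (entity_type, start, end), as a detector might report


def replace_op(value: str, entity_type: str) -> str:
    return f"<{entity_type}>"  # keeps sentence structure for LLM prompts


def redact_op(value: str, entity_type: str) -> str:
    return ""  # removes the value entirely


def mask_op(value: str, entity_type: str, visible: int = 4) -> str:
    hidden = max(len(value) - visible, 0)
    return "*" * hidden + value[hidden:]  # keep only the last `visible` characters


def hash_op(value: str, entity_type: str) -> str:
    # Same input always yields the same digest, so records can still be joined.
    return hashlib.sha256(value.encode("utf-8")).hexdigest()


# Illustrative policy: which operation applies to which entity type.
POLICY: Dict[str, Callable[[str, str], str]] = {
    "PERSON": replace_op,
    "PHONE_NUMBER": mask_op,
    "EMAIL_ADDRESS": hash_op,
    "US_SSN": redact_op,
}


def anonymize(text: str, spans: List[Span], policy: Dict[str, Callable[[str, str], str]]) -> str:
    # Work from right to left so earlier offsets stay valid after each substitution.
    for entity_type, start, end in sorted(spans, key=lambda s: s[1], reverse=True):
        operator = policy.get(entity_type, replace_op)
        text = text[:start] + operator(text[start:end], entity_type) + text[end:]
    return text


text = "Jane Doe called from 555-010-4242 about SSN 123-45-6789."


def span(entity_type: str, value: str) -> Span:
    start = text.index(value)
    return (entity_type, start, start + len(value))


spans = [span("PERSON", "Jane Doe"), span("PHONE_NUMBER", "555-010-4242"), span("US_SSN", "123-45-6789")]
print(anonymize(text, spans, POLICY))
# <PERSON> called from ********4242 about SSN .
```

One design caveat on the Hash row: an unsalted SHA-256 of a low-entropy value such as a phone number can be reversed by brute force, so in practice I would treat it as pseudonymization rather than anonymization.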

These techniques synergize with Privacy-Enhancing Technologies (PETs) used during development to create a robust Data Lineage from generation to consumption.

Global AI Governance and South Korea’s Policy Framework

As of 2025, the South Korean government and the Personal Information Protection Commission (PIPC) have introduced specific legislative frameworks that align with global standards like the GDPR.

Major Policies and Innovative Special Systems

The government balances innovation with privacy through several key initiatives:

- Prior Adequacy Review System: A flexible system where companies collaborate with the government on compliance plans during AI development to potentially receive exemptions from administrative fines.

- Pseudonymous Data Utilization: The “Personal Information Innovation Zone” allows the use of original data for essential innovations, such as autonomous driving, within a secure environment.

- Risk Assessment Models: Standardized models allow companies to pre-assess risks based on specific AI use cases, enhancing autonomous security.

Under these frameworks, companies must notify users of potential AI hallucinations and ensure human intervention in final decision-making, following the “Responsible AI Governance” principles.

Conclusion: Strategic Recommendations for Trustworthy AI

Preventing data leaks in AI development is a complex economic and policy challenge that requires a total redesign of corporate governance. While de-identification (PET) is the shield for the training phase, real-time PII filtering is the surveillance network for deployment.

Ultimately, only Trustworthy AI—backed by a systematic data governance policy—will survive in the market. Organizations must map their data lineage and utilize regulatory sandboxes, such as the Prior Adequacy Review, to resolve legal uncertainties. Ensuring that AI operates safely through these policy designs is becoming a core competitive advantage in the global market.